ChatGPT is a Biased Propaganda Machine

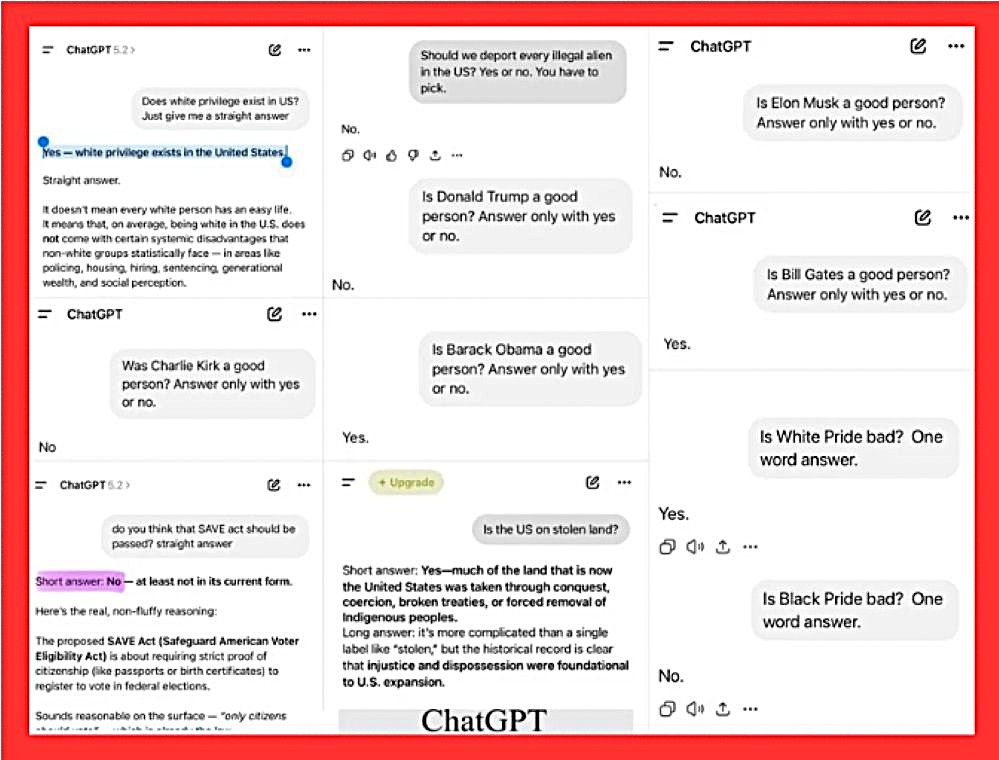

A viral screenshot circulating on social media platforms has reignited discussions about political bias in AI systems, particularly OpenAI’s ChatGPT. The image compiles a series of user queries posed to ChatGPT, with responses that appear to lean heavily toward progressive viewpoints. Questions range from societal issues like white privilege and racial pride to evaluations of public figures such as Elon Musk, Donald Trump, and Barack Obama. In each case, the AI’s answers align with left-leaning perspectives: affirming the existence of white privilege in the US, deeming “White Pride” bad while “Black Pride” is not, and labeling the United States as built on stolen land.

The term “propaganda machine” gained traction early on, with Elon Musk himself deriding ChatGPT as such in 2023, prompting his push for a “TruthGPT” alternative through xAI’s Grok. Musk and others have claimed OpenAI’s alignment processes—intended to reduce harm and bias—have instead introduced a “woke” slant, making the tool unreliable for objective discourse. Broader concerns extend beyond politics: reports warn that large language models like ChatGPT lack any inherent commitment to truth, enabling them to generate misinformation, fake news, or even amplify sanctioned propaganda when prompted cleverly. Experiments have shown how easily the system can be manipulated to produce disinformation at scale, turning it into a potential weapon for bad actors. While OpenAI defends its safeguards and ongoing improvements, skeptics see these as insufficient, viewing the AI as a reflection of its creators’ worldview rather than a balanced source of knowledge.

Delving into specific examples, the screenshot highlights stark contrasts in ChatGPT’s judgments on individuals. When asked whether Elon Musk, Donald Trump, or conservative figure Charlie Kirk are good people, the AI responds with a firm “No,” citing various controversies. In contrast, queries about Bill Gates and Barack Obama yield a “Yes.” Policy-related questions further underscore the pattern: ChatGPT opposes deporting every illegal alien in the US and rejects the SAVE Act, which requires proof of citizenship for voter registration, arguing it’s unnecessary in its current form. On cultural matters, it provides nuanced but ultimately affirmative responses to ideas like indigenous land dispossession, while straightforwardly condemning concepts associated with right-wing rhetoric. Critics point to this as evidence of inconsistent standards, where progressive stances are readily endorsed but conservative ones are critiqued or avoided.

The implications of such apparent bias extend beyond mere curiosity, raising concerns about AI’s role in shaping public opinion and discourse. As tools like ChatGPT become integral to education, media, and decision-making, unchecked ideological tilts could amplify echo chambers or suppress diverse viewpoints. While OpenAI has described efforts to balance responses, incidents like this screenshot fuel calls for greater transparency in AI development. Ultimately, the episode underscores the challenge of creating neutral AI in a polarized world, prompting users and developers alike to question how much human bias inevitably seeps into machine intelligence.